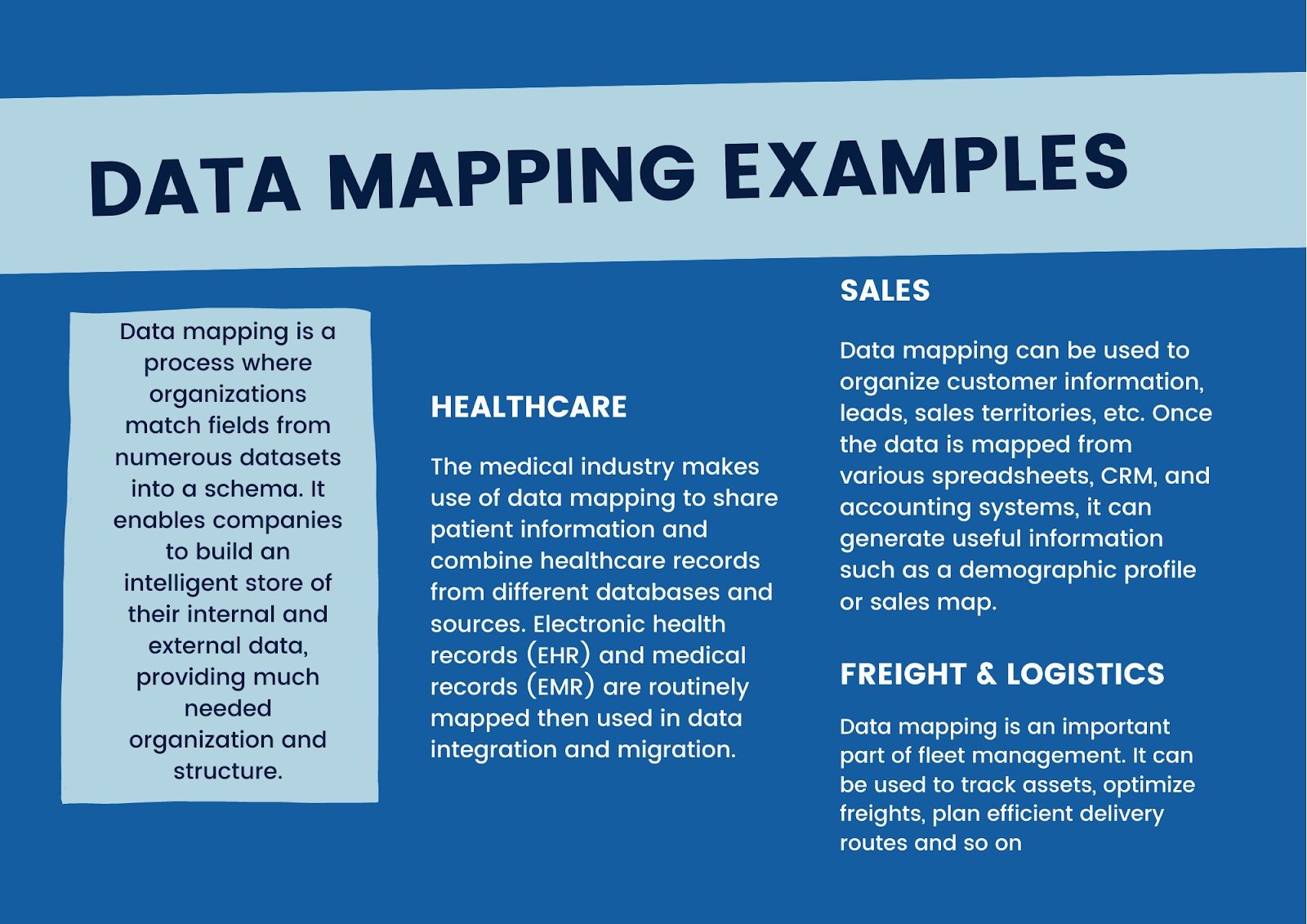

Data is the lifeblood of the modern organization, and the number of data sources companies generate and use is growing by the day. Data takes many shapes and forms, which can make it difficult to structure and organize.

In fact, much of today’s enterprise data exists in silos, segmented by departments, geographies and even individuals. This often creates inconsistencies and misalignment within the organization, which can increase costs and impact the bottom line.

To harness the power of data for better business outcomes, creating a complete, centralized picture of it is essential. This is why companies are increasingly turning to data mapping.

Data mapping and master data management (MDM)

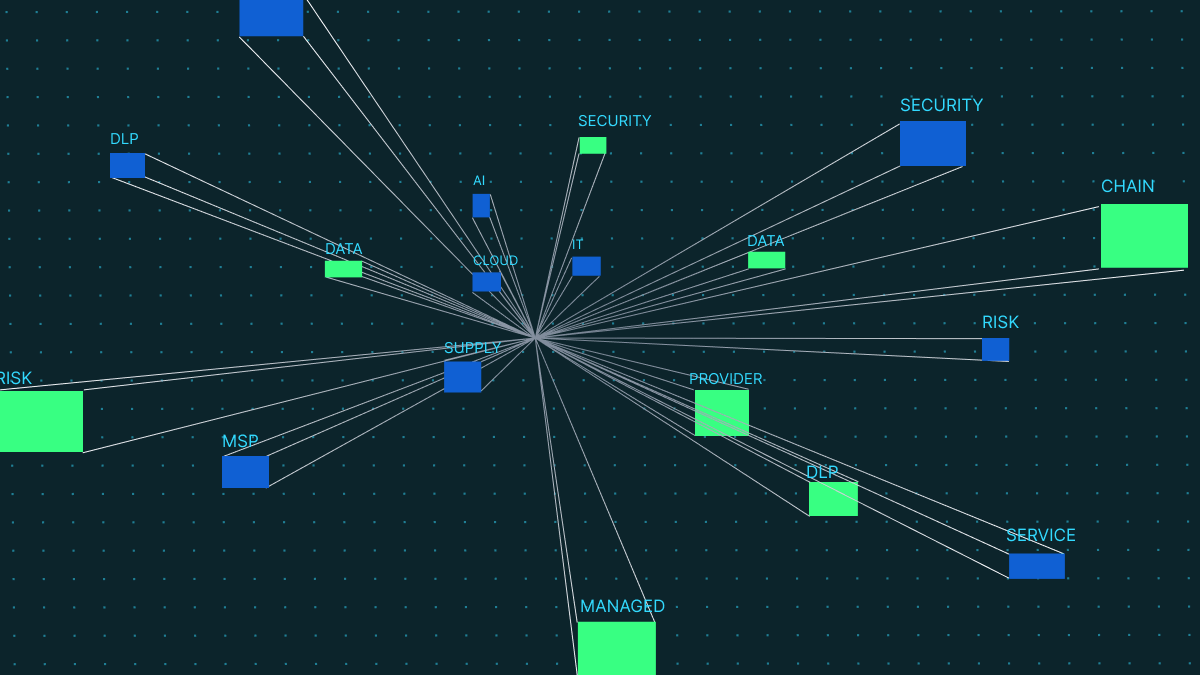

Data mapping is a core component of master data management (MDM), in which organizations match fields from numerous datasets to a single schema, by which we mean a centralized, unified database. It enables companies to build an intelligent store of their internal and external data, providing much-needed organization and structure to the masses of information they create.

Data mapping is essential for effectively managing and processing data – particularly in cases where organizations process sensitive data, which is subject to compliance regulations. It’s commonly used for:

- Data transformation or data mediation

- Identifying relationships as part of data lineage

- Consolidating databases and weeding out redundancies

- Finding hidden sensitive data as part of data masking

Without data mapping, it’s far too easy for organizations to lose track of their data, which can hurt productivity, as well as increase the likelihood of a costly data breach. However, not all data mapping strategies are fit for purpose.

Organizations today use a wealth of applications, collaboration tools and software to process and generate data. Just as their systems have become more complex and intricate, so too must data mapping. This means that organizations need to think about modernizing their data mapping strategy by turning to automation.

What are the steps of data mapping?

Data mapping usually takes the form of a six-step process, as outlined below:

- Define the data
  - Identify the data to be moved, including tables, fields and formats
  - Define the data transfer frequency as well, as part of data integration

- Mapping
  - This is where source fields are matched to the destination fields

- Data transformation
  - This step is necessary for fields that require a transformation
  - The formula or rule is coded to process the field

- Validation
  - The output is tested using a test system and sample data
  - Adjustments are made as needed prior to deployment

- Deployment
  - Once the system has been tested, the migration or go-live date is scheduled

- Maintenance
  - Constant updates are required in data integration cases
  - New sources may be added or changed, or destination requirements may evolve over time
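To make the define, map, transform and validate steps concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the source record, the field names (`cust_name`, `full_name` and so on), the date format and the validation rules are hypothetical, and a real project would normally rely on a dedicated mapping or ETL tool rather than hand-written dictionaries.

```python
from datetime import datetime

# Step 1 (define): a hypothetical record exported from a source system.
source_row = {"cust_name": "  Ada Lovelace ", "signup": "03/12/2021", "zip": "02139"}

# Step 2 (mapping): source field -> destination field (names are assumed).
FIELD_MAP = {
    "cust_name": "full_name",
    "signup": "signup_date",
    "zip": "postal_code",
}

# Step 3 (transformation): rules for destination fields that need conversion.
TRANSFORMS = {
    "full_name": str.strip,
    # Assumes the source stores dates as MM/DD/YYYY; the target wants ISO 8601.
    "signup_date": lambda s: datetime.strptime(s, "%m/%d/%Y").date().isoformat(),
}


def map_row(row):
    """Rename each source field and apply its transformation rule, if any."""
    out = {}
    for src, dest in FIELD_MAP.items():
        value = row[src]
        transform = TRANSFORMS.get(dest)
        out[dest] = transform(value) if transform else value
    return out


def validate(row):
    """Step 4 (validation): return a list of problems found in a mapped row."""
    problems = []
    missing = set(FIELD_MAP.values()) - set(row)
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    if not row.get("full_name"):
        problems.append("full_name is empty")
    return problems


mapped = map_row(source_row)
print(mapped)
print(validate(mapped))
```

Keeping the field map and the transformation rules in separate tables means the maintenance step usually comes down to editing data rather than rewriting logic when a source or destination changes.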

What are the common data mapping techniques?

There are three main techniques for mapping data.

Manual data mapping: IT personnel manually map or hand-code data sources to the target schema, without automating the process.

Schema mapping: A bridge between manual and automated data mapping, it involves the use of data mapping software to establish the relationship between the data source and the schema. Once the connections are made, the results are checked by human eyes and manual adjustments are made.

Automated data mapping: This uses specialized data mapping tools to automate the whole process. Such tools are designed to be simple to use even for laypersons, with an intuitive UI and drag-and-drop functions.
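As a rough illustration of the semi-automated approach, the sketch below proposes a target field for each source field and leaves anything uncertain for a person to resolve. It is only a sketch under stated assumptions: the field names are hypothetical, and plain string similarity from Python's standard `difflib` module stands in for the much richer matching that commercial mapping tools perform.

```python
from difflib import get_close_matches

# Hypothetical field names from a source table and a target schema.
source_fields = ["cust_name", "email_addr", "zip"]
target_fields = ["customer_name", "email_address", "postal_code", "phone"]


def suggest_mapping(sources, targets, cutoff=0.6):
    """Propose the most similar target name for each source field.

    Fields with no candidate above the similarity cutoff map to None,
    which flags them for manual review.
    """
    suggestions = {}
    for field in sources:
        match = get_close_matches(field, targets, n=1, cutoff=cutoff)
        suggestions[field] = match[0] if match else None
    return suggestions


suggestions = suggest_mapping(source_fields, target_fields)
for src, dest in suggestions.items():
    print(f"{src} -> {dest if dest else 'NEEDS MANUAL REVIEW'}")
```

Here `zip` has no name that resembles `postal_code`, so it falls through to manual review. This is the kind of gap that name matching alone cannot close.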

The role of data mapping in data management

Data mapping is a critical component of proper data management. Data that is not properly mapped may end up corrupted or unusable, or may cause integrity issues downstream. Quality is key when conducting data migrations, integrations and transformations.

Data migration

This is the process of moving data from one system to another. Data mapping plots the source fields and matches them to the right destination fields, ensuring data integrity during the transfer process.

Data integration

This is the regular movement of data from one system to another. Mapping is essential since unmapped data can cause systemic issues and loss of data integrity over time, especially as new data regularly comes in.

Data transformation

This is when data is converted into a certain format in the process of moving. For example, the data can be aggregated or changed into a different type. If the data is to be transformed, data mapping ensures the data is processed into the correct format in its destination.
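The two transformations mentioned here, a type change and an aggregation, can be sketched in a few lines of Python. The rows, the `region` and `amount` field names and the choice of `Decimal` for monetary values are assumptions for illustration only.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical source rows: amounts arrive as text from the source system.
source_rows = [
    {"region": "EU", "amount": "19.99"},
    {"region": "EU", "amount": "5.01"},
    {"region": "US", "amount": "42.00"},
]


def total_by_region(rows):
    """Convert each amount from text to Decimal, then sum per region."""
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for row in rows:
        totals[row["region"]] += Decimal(row["amount"])
    return dict(totals)


totals = total_by_region(source_rows)
print(totals)
```

The mapping decides which destination field receives the result. The transformation rule decides what form the value takes when it arrives.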

Data mapping and sensitive data

Data mapping also allows users to discover sensitive data, and then classify, categorize and manage it. For compliance with numerous privacy laws, data mapping is vital to attaining a clear view of personal data within the enterprise.

In fact, the EU’s General Data Protection Regulation (GDPR) effectively requires organizations to map their data flows in order to assess privacy risks. Article 30 obliges organizations to maintain records of their processing activities, which in practice calls for a data flow map, and such a map is also a common first step of the Data Protection Impact Assessment (DPIA) process under Article 35.

Data mapping best practices

Below are some tips to help you get started with data mapping.

Implement deep data discovery: Cover all data, including regulated, sensitive and high-risk data. Map every source you can reach in order to uncover dark data.

Use scan-based discovery: Manual mapping rests on the assumption that users have a perfect overview of all their data, which can lead to data gaps and inaccurate mapping. An automated scan-based survey can generate a more accurate and reliable map, or validate manual methods.
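A scan of this kind can be sketched as pattern matching over field values. The example below is a deliberately simplified assumption: the two regular expressions (email addresses and US Social Security number formats) and the sample records are hypothetical, and production discovery tools use far broader detection than a pair of patterns.

```python
import re

# Illustrative patterns only; real scanners cover many more data types.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical records with sensitive values hidden in a free-text field.
records = [
    {"id": 1, "notes": "Call back, reach at jane@example.com", "ref": "A-100"},
    {"id": 2, "notes": "SSN on file: 123-45-6789", "ref": "A-101"},
]


def scan(rows):
    """Return {field name: set of sensitive data types found in that field}."""
    findings = {}
    for row in rows:
        for field, value in row.items():
            if not isinstance(value, str):
                continue  # only text values are scanned in this sketch
            for label, pattern in PATTERNS.items():
                if pattern.search(value):
                    findings.setdefault(field, set()).add(label)
    return findings


findings = scan(records)
print(findings)
```

The scan flags the free-text `notes` field, which a manual inventory based on column names alone would be likely to miss.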

Make use of data loss prevention tools: Data loss prevention (DLP) is an excellent technical measure to help organizations map, classify and control sensitive data. It works by monitoring personal data as it moves through the organization’s infrastructure, including endpoints and cloud-based applications. This greatly reduces the risk of data loss, theft or exposure, while increasing enterprise visibility.