Authors: Yasir Ali (Founder, Polymer DLP) & Aaron Bray (Founder, Phylum)

Software supply chain is at risk with LLM adoption

Developers now have access to powerful generative AI models that assist in writing code, automating mundane tasks, and improving productivity. While this technology holds tremendous promise, it also brings to light a pressing concern—the potential risks associated with using AI-generated code in software development.

A recent Stanford study found that experienced developers using Codex (which powers Copilot, GitHub’s AI assistant for writing code) were more likely to write insecure code that introduced vulnerabilities.

In this blog, we will outline three main risk categories related to large language models (LLMs) within software development, explore recent examples of malware being inserted into codebases via LLMs, and delve into research by security vendors that sheds light on these risks. We will also discuss the upcoming launch of Polymer’s DLP with a software supply chain scanner, built in combination with Phylum. This release is intended to mitigate many of the risks associated with using LLMs in enterprise software development life cycles.

According to the OWASP Top 10 for LLM, software supply chain-related items are the biggest threat vectors on the list. Here is what OWASP flags as risks:

- Applications using LLM inputs could be used to send malicious payloads

- Prompt injection from malicious payloads from LLMs

- Denial of service via client chatbots

- Supply chain risk due to pre-trained models, crowd-sourced data, and extensions

- Data leakage

- Insecure output handling

- Overreliance

- Excessive agency

- Training data poisoning

- Permission issues

Key risks in the SDLC

The inclusion of LLMs in the software development process has created a host of new security and legal liability concerns that modern organizations are largely ill-equipped to defend against. First and foremost, it is important to recognize what LLMs do: they generate outputs based on a combination of inputs (provided through prompts) and statistical measures derived from their weights along with data they have been trained on.

While the use of generative AI in software development is still relatively nascent and far from fully explored, issues surrounding the trustworthiness and legality of LLM-generated code have already begun to surface. Among the available research, Endor Labs’ report examines the impact of LLMs on application security. The following risk factors arise when using LLMs in the software development life cycle:

Security issues

- Malware insertion from LLM-generated code in routines

- Secrets and sensitive data leakage (PII/PHI)

- Vulnerable and suspicious libraries/packages via LLMs

- Legal liability

While systematic studies of LLM-generated source code and software are very early, a number of concerns have already begun to emerge.

Among the bigger problems uncovered so far is the issue of so-called package hallucinations. This is a phenomenon in which a code-generating LLM, such as ChatGPT or GitHub’s Copilot, suggests packages, or generates source code that depends on packages, that either do not exist or are overtly malicious. A non-existent package leaves the door open to future compromise, because an attacker can register the hallucinated name and take ownership of it. An overtly malicious package leads to direct compromise, and this second case has become an ever-increasing concern.

This manifests as a byproduct of general LLM operation. While LLMs are powerful tools, just by nature of how they operate (based on statistical probabilities and training data), they have a tendency to hallucinate or potentially generate responses or output that does not align with reality.
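One way to guard against hallucinated dependencies is to check every package name an assistant suggests against a list the team already trusts, before anything is installed. The sketch below is a minimal illustration and does not describe how any particular product works. The `TRUSTED_PACKAGES` set, the `find_untrusted_packages` helper, and the sample requirement lines are all hypothetical; a real pipeline would more likely consult a registry, an internal mirror, or a lockfile.

```python
import re

# Hypothetical allowlist; in practice this might come from an internal mirror or lockfile.
TRUSTED_PACKAGES = {"requests", "numpy", "flask"}

# Matches the leading package name in a requirements-style line (e.g. "requests>=2.31").
_NAME_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Normalize a package name the way PyPI compares names (PEP 503)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_untrusted_packages(requirement_lines, trusted=TRUSTED_PACKAGES):
    """Return normalized package names that are not on the trusted list."""
    trusted_normalized = {normalize(name) for name in trusted}
    untrusted = []
    for line in requirement_lines:
        line = line.split("#", 1)[0]  # drop trailing comments
        match = _NAME_RE.match(line)
        if not match:
            continue  # blank line, comment-only line, or pip option such as "-r"
        name = normalize(match.group(1))
        if name not in trusted_normalized and name not in untrusted:
            untrusted.append(name)
    return untrusted


if __name__ == "__main__":
    # "fast_json_utils" is a made-up name standing in for a hallucinated package.
    suggested = ["requests>=2.31", "Flask", "fast_json_utils==1.0  # suggested by an assistant"]
    print(find_untrusted_packages(suggested))
```

Anything the function returns would then be reviewed by a person rather than installed automatically. An allowlist is deliberately conservative: it also flags legitimate packages the team has not used before, which is usually an acceptable cost for a first check.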

Another security concern that emerges from use of software generated by AI is the almost-certain propagation of unintentional software defects. An LLM’s output is, to a great degree, influenced by its inputs.

While some projects with high quality standards, such as the Linux kernel, may have relatively few bugs compared to the average codebase, most industry studies show an average of 20-30 bugs per 1,000 lines of code in a given project. Multiply this by the billions of lines of code that modern LLMs have probably been trained on, and the odds of real security issues being inadvertently introduced become very high.
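To make the scale concrete, the arithmetic can be sketched as follows. The corpus size of one billion lines is an assumption chosen purely for illustration (the text only says "billions"), and `estimate_defects` is a hypothetical helper. The defect rates are the 20-30 per 1,000 lines quoted above.

```python
def estimate_defects(lines_of_code: int, defects_per_kloc: float) -> float:
    """Estimate the number of defects in a body of code from a per-1,000-line rate."""
    return lines_of_code / 1000 * defects_per_kloc


# Assumed corpus size, for illustration only.
ASSUMED_TRAINING_LINES = 1_000_000_000

low = estimate_defects(ASSUMED_TRAINING_LINES, 20)
high = estimate_defects(ASSUMED_TRAINING_LINES, 30)
print(f"{low:,.0f} to {high:,.0f} defects")
```

Under that assumption, the training data alone would contain on the order of 20 to 30 million defects. Only some of these would be security-relevant, but each is a pattern the model may have learned to reproduce.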

Legal concerns

Who owns the source code generated by AI? What if the code is sourced from open source packages that do not allow commercial usage?

On top of the major (and very real) security concerns that now exist as a direct result of LLM use, there are also emerging legal challenges regarding the use of LLM outputs. In addition to the concerns around derivative outputs highlighted in lawsuits relating to image generation, there are more direct concerns relating specifically to source code. Not least of these is the tendency of some tools, like GitHub’s Copilot, to occasionally copy blocks of code wholesale, potentially without attribution or proper licensing. To make matters worse, such tools leave preventing these issues as an exercise for the user.
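Because this check is left to the user, one partial safeguard is to scan generated code for licensing markers before it is committed. The sketch below is only a first-pass filter under stated assumptions: `ALLOWED_LICENSES` and `find_license_flags` are hypothetical, and the scan catches only code that carries an explicit SPDX tag. It would miss copied code whose license header was stripped, which is the harder case.

```python
import re

# Hypothetical policy: SPDX identifiers this organization accepts in its codebase.
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}

# SPDX short-form tags look like "SPDX-License-Identifier: MIT".
# Only the first identifier of a compound expression is captured.
_SPDX_RE = re.compile(r"SPDX-License-Identifier:\s*([A-Za-z0-9.+-]+)")


def find_license_flags(source: str, allowed=ALLOWED_LICENSES):
    """Return (line_number, license_id) for each SPDX tag not on the allowed list."""
    flags = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        match = _SPDX_RE.search(line)
        if match and match.group(1) not in allowed:
            flags.append((line_number, match.group(1)))
    return flags


if __name__ == "__main__":
    generated = "# SPDX-License-Identifier: GPL-3.0-only\ndef helper():\n    return 42\n"
    print(find_license_flags(generated))
```

A hit does not prove a licensing violation, and a clean result does not prove the code is original. The scan simply routes the clearest cases to someone who can make the legal call.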

LLM concerns & the software supply chain

While these issues certainly have a direct impact on internal code, there is also an indirect impact to third-party packages and libraries. Even if an organization totally prevents use of generative AI in its internal products, the risks outlined above also impact code that is indirectly consumed through open source libraries, which are unlikely to be as stringent in screening contributions.

Additionally, these tools enable attackers who have increasingly leveraged automation to conduct operations through the open source ecosystem and third-party packages. These sorts of attacks, which have already crippled package registries, can use generative tooling to scale their efforts even more efficiently.

Supply chain risks have increased dramatically

The supply chain for LLM-based methods can be susceptible to vulnerabilities that compromise the integrity of various components, including training data, machine learning (ML) models, and deployment platforms. This can result in biased outcomes, security breaches, or even complete system failures. Traditionally, vulnerability concerns were centered around software components, but with the rise in popularity of transfer learning, the reuse of pre-trained models, and crowdsourced data, these issues have extended to AI systems.

Public LLMs, such as ChatGPT, are especially at risk, with extension plugins being a notable area of vulnerability. These factors make it crucial to address supply chain security comprehensively in the context of AI to safeguard against potential threats and maintain the reliability of AI-powered systems.

Exhibit 1: Supply chain vulnerability causes OpenAI breach

The OpenAI breach of March 2023 resulted from a bug in redis-py, the open-source client library for Redis, a widely used in-memory data store that OpenAI’s ChatGPT relies on. According to OpenAI’s account of the incident, the bug allowed some users to see data belonging to other active users, including chat history titles and, for a small share of subscribers, payment-related details.

The bug was triggered when a request was cancelled at the wrong moment, which could leave a shared connection in a corrupted state and return data intended for one user to another. No specially crafted exploit was required, which illustrates how a defect in a single open source dependency can expose sensitive data.

Exhibit 2: Supply chain attacks continue to escalate

In addition to more traditional vulnerability-driven issues, real supply chain attacks, more in line with the now-infamous SolarWinds breach, have escalated dramatically. These attacks frequently target software developers and CI/CD infrastructure, with the goal of stealing credentials to production data or otherwise gaining further access. In Q1 and again in Q2 of 2023, a staggering number of malicious and spam packages were published, some of which have since been attributed to nation-state threat actors.

Exhibit 3: Secrets & sensitive data insertion in code

According to a recent study, over 100k public GitHub repositories have exposed secrets. This issue predates widespread LLM adoption, but it has become more urgent as developers rely increasingly on auto-generated code.

Some of the more common entities found in public repositories are IP addresses, customer PHI, and login information. PII and PHI are also found in comments or hard-coded directly in procedures.
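A scan for these entities can be sketched with a handful of regular expressions run over each line of source, comments included. This is a minimal illustration and not how Polymer or Phylum work internally: the `PATTERNS` table and `scan_for_secrets` function are hypothetical, the three patterns are deliberately simple, and production scanners rely on much larger rule sets plus validation to limit false positives.

```python
import re

# Illustrative patterns only; real scanners use far larger, tuned rule sets.
PATTERNS = {
    # AWS access key IDs start with "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Loose IPv4 match; it does not check that each octet is <= 255.
    "ipv4_address": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    # A credential-like name assigned a quoted literal, e.g. password = "..."
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:password|passwd|secret|api_key)\s*=\s*[\"'][^\"']+[\"']"
    ),
}


def scan_for_secrets(source: str):
    """Return (line_number, pattern_name) for every pattern hit, comments included."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, name))
    return findings


if __name__ == "__main__":
    sample = (
        'db_host = "10.0.0.12"\n'
        'password = "hunter2"  # TODO: remove\n'
        "print('hello')\n"
    )
    print(scan_for_secrets(sample))
```

Running a check like this in a pre-commit hook or CI job means a secret is caught before it reaches a shared repository, which matters more as a growing share of code is pasted in from generated output without a close read.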

Securing the SDLC with Polymer & Phylum

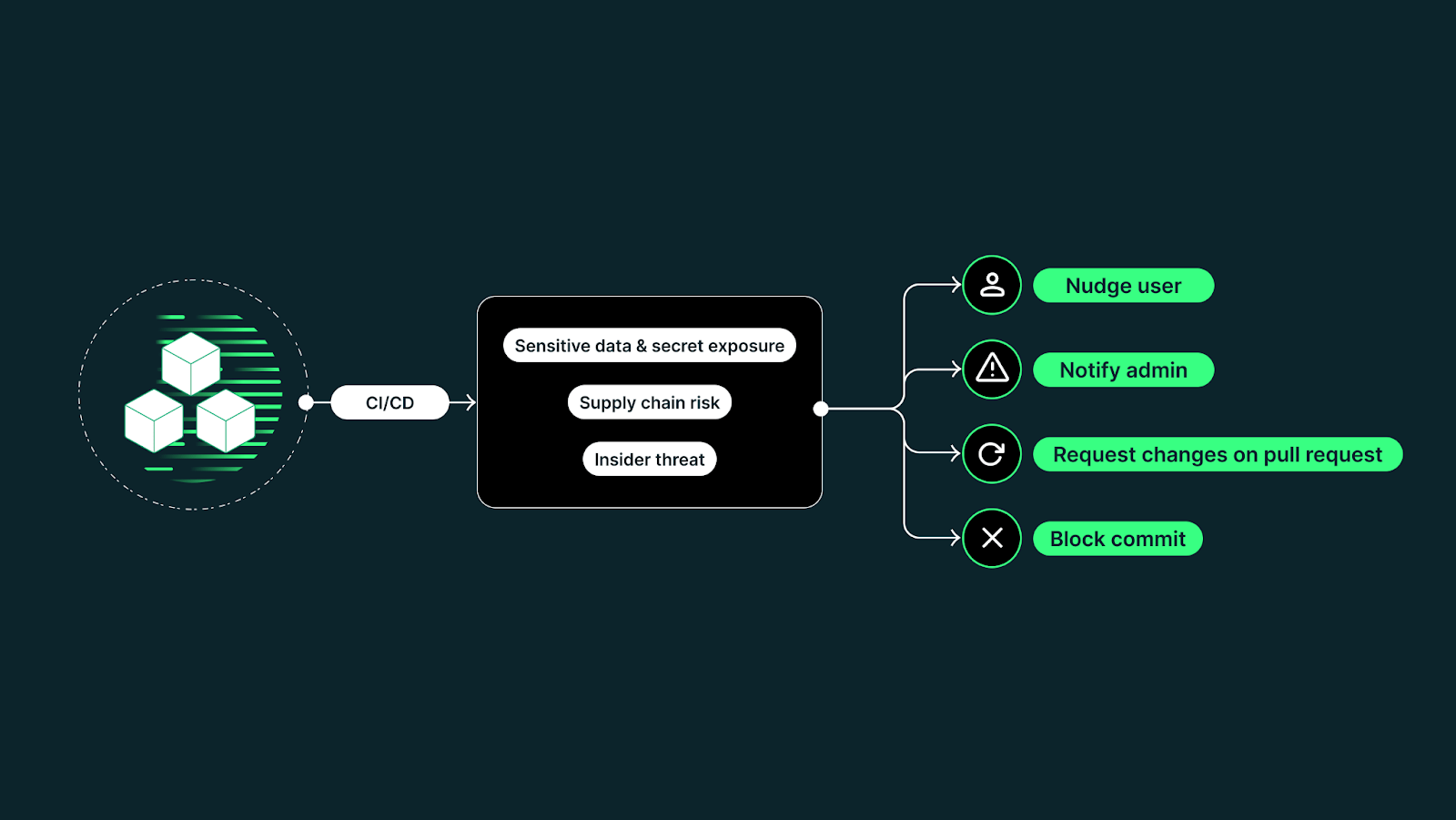

Polymer and Phylum combine best-in-class DLP and software supply chain management in an exciting joint release. The joint solution is designed to address the risks discussed above and can be deployed in under 15 minutes.

Software supply chain risks are real and pressing. It is imperative that organizations take a comprehensive approach that protects against the full spectrum of risks within the software supply chain. That spectrum runs from data leakage when proprietary data is copied out to craft prompts, to blindly copying the output of generative AI tools into in-house software, to the risks posed by the use of LLMs in third-party code.

Polymer and Phylum together cover 6 out of the 10 threat vectors identified by OWASP.

Polymer and Phylum represent the first solution in the market to span this full range of concerns. Together, they bring a powerful DLP capability into the CI/CD context to address the internal risks of generative AI use, and pair it with AI-driven software supply chain capabilities that proactively identify and block the third-party risks these tools present.

Please reach out to the Polymer or Phylum team to learn more.