Data loss prevention (DLP) is meant to do exactly what it says on the tin: prevent data loss. For today’s enterprises, this is a huge concern. Who doesn’t want to protect their reputation, assets, and bottom line from the fallout of a data breach or compliance fine? In line with this, the DLP market is soaring. Annual sales reached $1.2 billion in 2020 and new vendors are popping up by the month.

And yet, data breaches still happen on a regular basis. Something is going amiss. We know that enterprises are using DLP, so the problem must be that DLP is failing to help them where they need it. Why might this be? Well, let’s take a quick look at the evolution of DLP in the enterprise.

Data loss prevention: a brief history

The DLP category emerged in the early 1990s, as organizations began to use email and the internet on a widespread basis. IT leaders were concerned about sensitive data being exfiltrated via email, and so security experts embarked on a mission to create DLP – a solution that can redact sensitive data or block it from being transferred out of the network without authorization.

For a while, the solution prospered but, as we all know, digitalization is far from stagnant. Over the last ten years, the enterprise has become borderless. Network boundaries are fading in favor of cloud-based tools and applications, and more and more employees are using collaboration tools, SaaS applications and different email providers to stay productive. The network perimeter is becoming obsolete, and so the version of DLP that launched in the 1990s, which focuses on stopping data from leaving the perimeter, simply doesn’t cut it anymore.

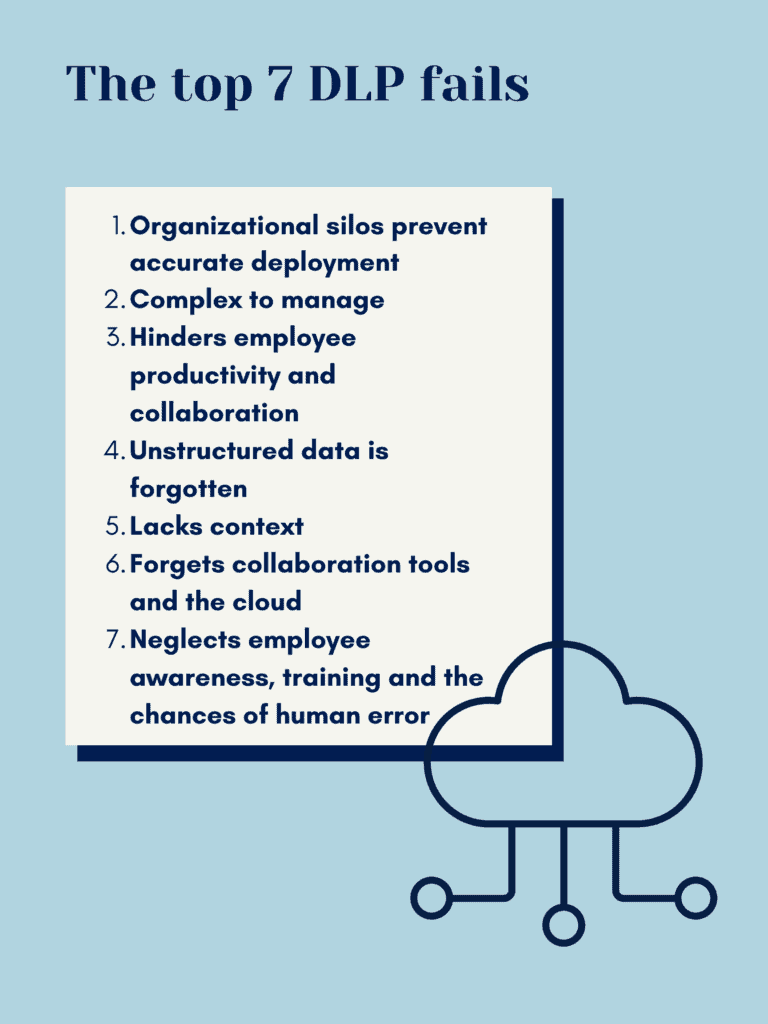

Here are 7 reasons why.

The 7 legacy DLP fails

1. Organizational silos prevent accurate deployment

DLP is all about protecting sensitive data – but how do you know what data is sensitive and what data isn’t? This is where data classification comes in. It’s a prerequisite to successful DLP, which we’ve written about here. Trouble often arises, though, because the team that classifies data in an enterprise is different to the security team who manage the DLP solution. What this means is that there is often a proverbial brick wall between the team that is protecting data and the team that knows which data to protect.

After all, data is anything but static. Enterprises are generating, sharing and storing vast amounts of data each day. What was sensitive yesterday may not be sensitive today, and what was once low-risk data can quickly become high-risk. Unfortunately, traditional DLP solutions are too slow to keep up with the dynamic pace of modern organizations. Classification can often be a manual, labor-intensive task that takes a lot of time. Within this period, sensitive data could leak or be stolen.

2. Complex to manage

For DLP to be effective, the security team needs to map out all possible data paths and types, and determine who is allowed access to what. This, in itself, is an extremely time-consuming task. But it’s also never-ending. Employees and job roles are constantly in flux, meaning data usage patterns never settle into a stable status quo.

Then there’s the fact that communications rarely stick to one channel anymore. Aside from corporate email, employees and contractors now often communicate over collaboration tools, their personal email accounts and even on their phones. Keeping track of all the possible data paths, as they change, is an overwhelming and unrealistic task.

This complexity can lead to the security team overcompensating – locking policies down so tightly that even authorized data transfers are blocked or flagged. Unfortunately, this means that legacy solutions generate a lot of false positives, which add an extra burden to the security team’s workload in the form of constant notifications, a problem known as “alert fatigue”.

3. Hinders employee productivity and collaboration

As we’ve just touched upon, legacy DLP solutions are often too stringent. They prevent employees from accessing, transferring and receiving data that they should have access to – the kind of data that is essential to getting the job done. This has a knock-on impact in two ways.

Firstly, when employees become frustrated by a solution, they are less likely to champion it. For DLP to work, employees need to buy into it and follow its policies. Secondly, this frustration may lead to employees circumventing the DLP solution altogether. For example, if they can’t send a file via their corporate email, then they’ll use a cloud application instead. Ultimately, employees will not let security trump their productivity – which is exactly what legacy DLP often tries to do.

4. Unstructured data is forgotten

Legacy DLP solutions work through a process of pattern recognition. They look for sensitive data within structured enterprise data. However, today, most data in the enterprise is unstructured and unregulated. In fact, it’s predicted that by 2024, 80% of organizations’ data will be unstructured. Within this context, traditional DLP simply isn’t useful.

Ideas and IP are constantly being generated, modified and uploaded in all sorts of different formats, and legacy DLP isn’t equipped to keep up with this pace of change. While it might protect structured data, it leaves a whole world of sensitive data untouched and vulnerable to leakage.

5. Lacks context

Traditional DLP solutions are useful for securing obvious types of sensitive data, such as credit card numbers or social security numbers. However, in the age of online crime, sensitive data has become harder to define. What is sensitive for one company may not be sensitive to another, and every organization’s IP will be slightly different.

Legacy DLP solutions, though, lack dynamic, contextual awareness of the individual enterprise. Sure, they may redact a credit card number from an email, but they won’t necessarily stop a disgruntled employee from sending top secret business information to a competitor.
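
To make this concrete, here’s a minimal sketch of the kind of pattern-based redaction described above. It’s an illustration under our own assumptions rather than how any particular DLP product works: the regular expression, the Luhn checksum step, the function names and the sample text (including the made-up company “Acme Corp”) are all hypothetical.

```python
import re

# Assumption: a card number is 13-19 digits, optionally separated by
# single spaces or hyphens. Real products use far richer detectors.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if a string of digits passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_cards(text: str) -> str:
    """Replace anything that looks like a valid card number with a placeholder."""

    def _replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[REDACTED CARD]" if luhn_valid(digits) else match.group()

    return CARD_PATTERN.sub(_replace, text)


if __name__ == "__main__":
    # 4111 1111 1111 1111 is a widely used test card number, not a real one.
    email = (
        "Card: 4111 1111 1111 1111. "
        "Also, the Q3 acquisition target is Acme Corp."
    )
    print(redact_cards(email))
```

The card number is caught because it has a recognizable shape. The sentence about the acquisition target passes straight through, because nothing about its format tells a pattern matcher that it is sensitive.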

6. Neglects employee awareness, training and human error

Traditional DLP solutions are built on hard and fast policies. For example, let’s say an employee is not allowed to send sensitive data to certain email addresses. However, the employee types in someone’s email address manually and includes a mistake. The email is sent and the DLP solution doesn’t stop it, as it doesn’t recognize the “to” address as blocked. This kind of human error – which is common in the workplace – undermines DLP solutions.

It would be better if a solution could integrate dynamically into the workflow – offering employees nudges and reminders before they send emails or messages with sensitive data, to check they’re sure about their action. We’ve written extensively about this idea – which is called nudge theory – here.
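
As a rough illustration of the difference, the sketch below compares a hard-and-fast blocklist check with a check that nudges the sender when a recipient is a near-miss for a blocked address. Everything here is an assumption made for the example: the addresses, the function names and the 0.9 similarity threshold are hypothetical, and the similarity score comes from Python’s standard `difflib` module rather than from any DLP product.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical blocklist of addresses that must not receive sensitive data.
BLOCKED_RECIPIENTS = {"jane.doe@competitor.example"}


def exact_match_blocked(recipient: str) -> bool:
    """Legacy-style rule: block only if the address matches exactly."""
    return recipient.lower() in BLOCKED_RECIPIENTS


def closest_blocked(recipient: str, threshold: float = 0.9) -> Optional[str]:
    """Return the most similar blocked address if it clears the threshold."""
    recipient = recipient.lower()
    best, best_score = None, 0.0
    for blocked in BLOCKED_RECIPIENTS:
        score = SequenceMatcher(None, recipient, blocked).ratio()
        if score > best_score:
            best, best_score = blocked, score
    return best if best_score >= threshold else None


def check_recipient(recipient: str) -> str:
    """Return 'block', 'nudge' (ask the sender to confirm) or 'allow'."""
    if exact_match_blocked(recipient):
        return "block"
    if closest_blocked(recipient) is not None:
        return "nudge"
    return "allow"


if __name__ == "__main__":
    for address in (
        "jane.doe@competitor.example",  # exact match
        "jane.deo@competitor.example",  # typo: slips past the exact rule
        "bob@partner.example",          # unrelated address
    ):
        print(address, "->", check_recipient(address))
```

The exact-match rule waves the mistyped address through, while the similarity check pauses and asks the sender to confirm. The threshold is a judgment call: set it too low and employees are nudged constantly, which brings back the alert fatigue described earlier.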

7. Forgets collaboration tools and the cloud

We’ve left this one until last – but it’s probably the biggest issue with legacy DLP solutions today. We have to remember that legacy DLP was invented in the 1990s, before smartphones, SaaS tools and remote working were really even a thing. Sure, the solution stops sensitive data from leaving the perimeter – but there isn’t a perimeter anymore.

Most traditional DLP solutions simply aren’t intelligent or far-reaching enough to monitor sensitive data as it travels through the cloud. That makes cloud applications a huge exit point for sensitive data, which could lead to leaks or even a breach. In fact, IDC estimates that 80% of companies experienced at least one cloud data breach in the last year and a half.

Reimagine DLP for the modern enterprise

At this point, you may be thinking – why is a DLP provider slating DLP? Well, to be specific, we’re talking about legacy DLP here – the kind of solutions that are outdated and should stay in the 90s. However, just as enterprises have evolved, so too have security technologies. Now, a new generation of DLP solutions is on the market, designed to tackle the exact failures we mentioned above.

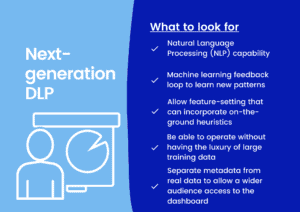

This next generation of tools is AI-powered, cloud-aware and contextual. It extends data protection outside of the corporate network and directly into SaaS applications, giving security teams much-needed control and visibility over how data is being used and stored – no matter where it travels.

Here’s a quick checklist of things to look out for in a next-gen DLP solution:

If you are looking for a DLP solution, check out our DLP for SaaS buyers’ guide.