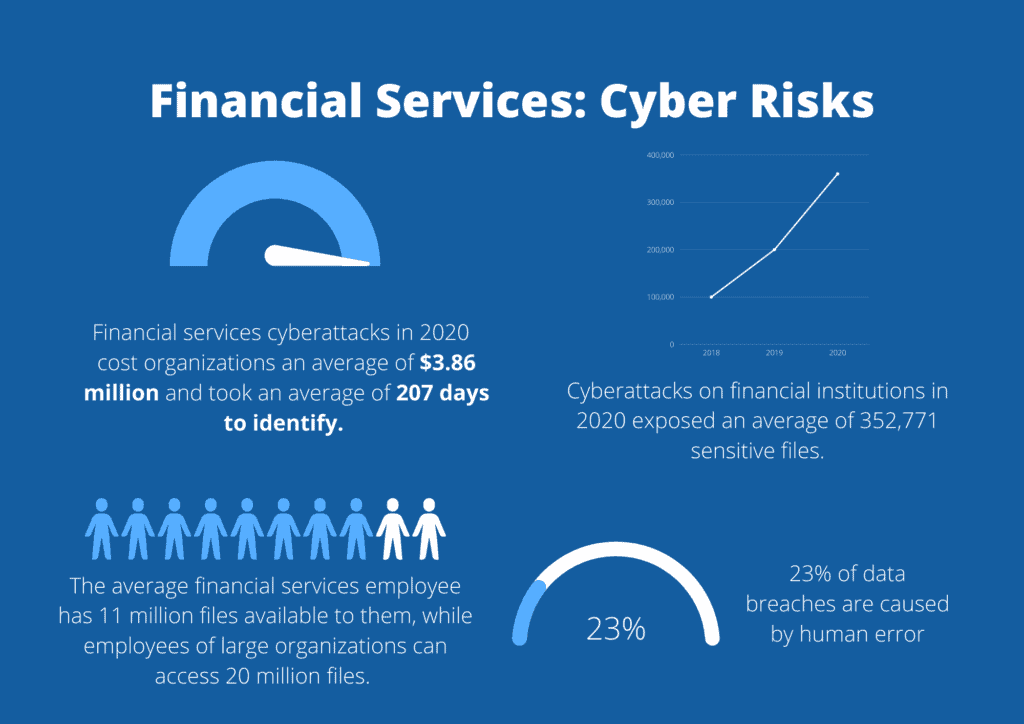

For an industry as highly regulated as financial services, these stats may surprise you:

- In 2020, data breaches caused by cyberattacks on financial services cost organizations an average of $3.86 million and took an average of 207 days to identify.

- Cyberattacks on financial institutions in 2020 exposed an average of 352,771 sensitive files.

- The average financial services employee has 11 million files available to them, while employees of large organizations can access 20 million files.

Why isn’t data protection working for financial services?

So, what gives? It’s not that financial institutions aren’t investing in cybersecurity. According to a survey of 571 community banks in 37 states conducted by the Conference of State Bank Supervisors, more than 70% of respondents ranked cybersecurity as their top concern.

Financial services know data breaches are an issue. They’re just struggling to get protection right.

Unique challenges in financial services

It’s no surprise that the financial services industry is a top target for malicious actors. Financial institutions create, manage and store a goldmine of lucrative personal and financial data: bank account numbers, social security numbers, credit card numbers, merger and acquisition (M&A) data, intellectual property (IP)… You name it, and banks have probably got it.

As well as contending with the threat of external actors, financial institutions also need to be aware of insider issues. Financial services, after all, is one of the most competitive industries today, which makes it more vulnerable to insider threats.

These issues, together with the overwhelming amount of sensitive data that banks process, are why financial services is one of the most highly regulated industries. GLBA, COPPA, FACTA, HIPAA, CCPA and GDPR are just some of the regulations that banks are subject to.

But meeting compliance demands and keeping data safe is proving an ongoing challenge. In 2020, 198 fines were imposed against financial services institutions, up 141% from the year before, with penalties totaling $10.4 billion.

And that’s just the cost of the penalties themselves. Data breaches can have wide-ranging, long-term repercussions – especially for banks. Financial services firms rely on their brand proposition: they’re meant to be beacons of trust and integrity. A data breach, or any symptoms of failure to protect customer data, could undermine a company’s entire proposition.

Why are financial services (FS) companies failing to protect sensitive data?

Here are the most common reasons for data breaches in the FS industry.

Cybercriminals are investing just as much as FS companies are

We know that FS companies invest in cybersecurity. But we need to remember that cybercriminals are willing to invest too: they put their time, energy and resources into attacking FS infrastructure. For example, the Carbanak and Cobalt malware campaign saw malicious actors steal over $1 billion from 100 financial institutions over five years.

Ransomware is also a potent risk to the sector. VMware saw ransomware attacks up ninefold between February and April 2020. Last year, we also saw the Chilean bank BancoEstado shut down branches after a ransomware attack.

Sprawling supply chains and cloud adoption make security more complex

The SolarWinds attack exemplifies a rising issue across industries: supply chain – or island hopping – attacks. In these scenarios, a hacker will typically infiltrate the network of a small SaaS provider and then move laterally into their clients’ systems and infrastructure – some of which could be FS companies.

SaaS is its own issue too. Hybrid work is becoming more and more common, and many FS companies use applications like Slack, Teams, and Zoom to communicate and stay productive while working from home. While this can be great for collaboration, these applications create new data security issues. The cloud is inherently opaque, making it difficult for FS IT teams to keep track of sensitive data as it moves through different endpoints, networks and applications.

The human factor

Over 64% of financial services companies have 1,000+ sensitive files accessible to every employee. But FS employees are only human. One accidental click of the wrong button could expose a wealth of trade secrets. This risk is even more pertinent in the WFH world, where employees might be distracted by their families or constant alerts from collaboration apps.

Legacy DLP makes things worse

Most FS companies have a data loss prevention (DLP) solution in place. What many don’t realize is that their DLP solution could be counter-productive, especially if it’s a traditional solution. Here’s why:

Reactive rather than proactive

FS companies generate, share, and store vast amounts of data each day. What was sensitive yesterday may not be sensitive today, and what was once low-risk data could quickly become high-risk. Unfortunately, traditional DLP solutions are too slow to keep up with the dynamic pace of modern organizations. Classification can often be a manual, labor-intensive task that takes a lot of time. Within this period, sensitive data could leak or be stolen.

Complexity

For legacy DLP to be effective, the security team must map out all possible data paths and types and determine who is allowed access to what. This, in itself, is a highly time-consuming task. But it’s also never-ending. Employees and job roles are constantly in flux, meaning data usage patterns never settle into a stable status quo.

Plus, communications rarely stick to one channel anymore. Employees and contractors now often communicate over collaboration tools, personal email accounts, and even their phones. Keeping track of all the possible data paths as they change is an overwhelming and unrealistic task.

This complexity can lead to the security team overcompensating with overly strict rules, which make it difficult for employees to transfer data even when they have a legitimate reason to. Unfortunately, this means that legacy solutions generate a lot of false positives, which add an extra burden to the security team’s workload in the form of constant notifications. The result is what’s known as “alert fatigue”.

Limited coverage

Legacy DLP solutions work through a process of pattern recognition. They look for sensitive data within structured enterprise data. However, today, most data in the enterprise is unstructured and unregulated. In fact, it’s predicted that by 2024, 80% of organizations’ data will be unstructured. Within this context, traditional DLP isn’t practical.
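
To make the limitation concrete, here is a minimal sketch of pattern-based detection. It assumes a single hypothetical rule (a regular expression for a US social security number in its canonical `123-45-6789` form) and made-up sample messages; it is not taken from any particular DLP product.

```python
import re

# Hypothetical rule: a US social security number in its canonical format.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def contains_ssn(text: str) -> bool:
    """Return True if the text contains a canonically formatted SSN."""
    return SSN_PATTERN.search(text) is not None


# Structured data: the value sits in a known field, in a known format.
structured_field = "123-45-6789"

# Unstructured data: the same number, written the way people type in chat.
chat_messages = [
    "my social is 123 45 6789, can you update the account?",
    "SSN: one two three, 45, 6789",
]

print(contains_ssn(structured_field))               # True
print([contains_ssn(m) for m in chat_messages])     # [False, False]
```

The rule catches the value in its expected format but misses both chat messages, which is the gap that opens up once most data lives in free-form text.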

Too static

Traditional DLP solutions are built on hard and fast policies. For example, let’s say an employee is not allowed to send sensitive data to particular email addresses. However, the employee manually types in someone’s email address and includes a mistake. The email is sent, and the DLP solution doesn’t stop it, as it doesn’t recognize the “to” address as blocked. This kind of human error – which is expected in the workplace – undermines DLP solutions.
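
The scenario above can be sketched in a few lines. The blocklist, the addresses, and the function names are all hypothetical, and the similarity check (using Python’s standard `difflib` module) is included only as an illustrative contrast to the exact-match rule, not as a description of how any specific product works.

```python
from difflib import get_close_matches

# Hypothetical static policy: recipients that must never receive sensitive data.
BLOCKED_RECIPIENTS = {"deals@competitor-bank.example"}


def static_rule_blocks(recipient: str) -> bool:
    """Exact-match check, the way a hard-and-fast rule behaves."""
    return recipient.lower() in BLOCKED_RECIPIENTS


def near_blocked(recipient: str, cutoff: float = 0.9) -> list[str]:
    """Return blocked addresses that look almost identical to the recipient."""
    return get_close_matches(
        recipient.lower(), sorted(BLOCKED_RECIPIENTS), n=1, cutoff=cutoff
    )


# The employee mistypes the address ("bnak" instead of "bank").
typo = "deals@competitor-bnak.example"

print(static_rule_blocks(typo))  # False: the static rule lets the email through
print(near_blocked(typo))        # the similarity check still flags the near match
```

An exact-match rule only knows about the strings it was given, so a single transposed character is enough to slip past it.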

How cloud-based DLP can help FS companies

As we move towards a new year, now is the perfect time for FS companies to reconsider their DLP strategy and adopt a cloud-based, context-aware, and dynamic solution.

Polymer’s DLP platform uses artificial intelligence and machine learning to discover and protect FS data as it moves through collaboration applications. It extends data protection outside of the corporate network and directly into SaaS applications, giving security teams much-needed control and visibility over how data is being used and stored, no matter where it travels.

For example, Routefusion, a high-growth cross-border payment SaaS platform used by banks, adopted Polymer DLP for Slack and Google Drive. The integration has successfully protected the company from both accidental and intentional data leaks. We were able to remove over 97% of all sensitive data elements shared in public chats in real time while blocking virtually all sensitive files from being shared with unauthorized parties.

“Polymer just worked out of the box. Minimal tweaking of rules with great support was done within 2-3 sessions” – Michael Cramer, Head of Operations at Routefusion.

Learn more about Polymer for financial services today or request further information via this form.