The rise of AI is reshaping the foundations of modern business. In 2021, enterprises spent $40 billion on digital transformation, and they are increasingly turning to AI to drive efficiencies, enhance customer experiences, and gain competitive advantages. However, the challenges of AI integration mirror those experienced during previous (or ongoing) cloud and digital transformations.

Lessons from digital transformation journeys of yore

The landscape of digital transformation has always been complex. Historically, these projects took an average of 18-24 months to yield measurable results. Simpler projects, such as the HR department adopting Workday or Salesforce, are possible with a phased-deployment approach. Broad-scope projects, like data warehousing, are intricate and time-consuming endeavors. Central to this complexity is master data management (MDM). MDM is a technology-enabled discipline in which business and IT work together to ensure the uniformity, accuracy, stewardship, semantic consistency and accountability of the enterprise’s official shared master data assets. Let’s dig in.

Overpromise of master data management (MDM) programs

Though promising on paper, MDM often stumbles in reality. When a program does succeed, however, the payoff is substantial. MDM programs can:

- Improve data quality: MDM programs can help improve the quality of the data by identifying and correcting errors, inconsistencies, and redundancies.

- Increase data accessibility: MDM programs can make it easier for users to access the data they need, when they need it. This is because MDM programs create a single, unified view of the data.

- Enhance data security: MDM programs can enhance data security by implementing data encryption, access control, and auditing procedures.

- Provide better business insights: MDM programs can help organizations gain better business insights by providing them with a more complete and accurate view of their data. This might lead organizations to make better decisions about their business.

However, a Gartner survey highlighted that 85% of all MDM projects failed to deliver on their projected ROI. How do past MDM failures translate to current AI initiatives? First, it’s important to understand why they failed.

Why do MDM programs fail?

Below are the top five reasons MDM programs fail.

- Data sprawl: The average enterprise has data scattered across 5 to 7 different systems, which complicates unified management.

- Legacy systems: Legacy systems with diverse data schemas are a significant integration challenge.

- Unstructured data: A study revealed that 80% of an enterprise’s data is unstructured. Without advanced NLP, extracting valuable insights from unstructured data is difficult or impossible.

- Data de-duplication: Even with sophisticated tools, achieving more than 70% accuracy in data de-duplication is an uphill battle.

- Sensitive data: With the average enterprise handling terabytes of data, pinpointing and managing sensitive data is onerous.
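
To make the de-duplication problem concrete, here is a minimal sketch of fuzzy matching on customer names using Python's standard `difflib` module. The record names, the `normalize` helper, and the 0.85 threshold are all assumptions chosen for illustration; production MDM tools use far more elaborate matching.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace before comparing."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(cleaned.split())


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity score between two normalized strings."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def find_duplicates(records: list[str], threshold: float = 0.85) -> list[tuple[str, str, float]]:
    """Compare every pair of records and keep those scoring at or above the threshold."""
    pairs = []
    for i in range(len(records)):
        for j in range(i + 1, len(records)):
            score = similarity(records[i], records[j])
            if score >= threshold:
                pairs.append((records[i], records[j], round(score, 2)))
    return pairs


# Hypothetical customer names as they might appear across several systems.
customers = [
    "Acme Corporation",
    "ACME Corp.",
    "Acme Corporation, Inc.",
    "Globex LLC",
]
duplicates = find_duplicates(customers)
for left, right, score in duplicates:
    print(f"{left!r} ~ {right!r} (score {score})")
```

With these sample names, the abbreviated "ACME Corp." is not flagged at the 0.85 threshold even though a person would recognize it as the same company. Lowering the threshold catches more true duplicates but also starts merging records that are genuinely different, which is the trade-off that keeps de-duplication accuracy low.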

Understanding why MDM programs fail is important when thinking about implementing AI in the enterprise. Achieving some level of MDM competency is necessary for a successful outcome.

Data issues for an AI deployment

When considering an AI initiative for your organization, learn from past MDM failures.

- Define the use case: Be it streamlining HR processes or deploying a customer service chatbot, ensure your objectives are crystal clear.

- Understand data requirements: A study from McKinsey found that businesses could unlock $3-5 trillion in value by using AI to analyze the vast datasets they possess. Take time to review and comprehend your data.

- Operationalize data flow: Ensure your data flow is seamless, because it is the backbone of any successful AI transformation.

- De-duplicate data: Further refine your dataset by eliminating duplicates.

- Address sensitive data: 23% of an organization’s data could be sensitive. Understand what data is sensitive to your organization and manage it appropriately.
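
One way to start on the last step is to measure how much of a dataset looks sensitive before it goes anywhere near a model. The sketch below is a rough illustration only: the two regular expressions, the `classify` and `sensitive_share` helpers, and the sample records are assumptions, and real detection needs much broader coverage than emails and US Social Security numbers.

```python
import re

# Illustrative patterns only; real detection needs far broader coverage.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(record: str) -> set[str]:
    """Return the labels of every pattern that appears in the record."""
    return {label for label, pattern in SENSITIVE_PATTERNS.items() if pattern.search(record)}


def sensitive_share(records: list[str]) -> float:
    """Return the fraction of records that contain at least one sensitive match."""
    if not records:
        return 0.0
    flagged = sum(1 for record in records if classify(record))
    return flagged / len(records)


# Hypothetical records pulled from a ticketing system.
sample = [
    "Ticket 1042: printer offline on floor 3",
    "Please contact jane.doe@example.com about the renewal",
    "Employee SSN on file: 123-45-6789",
    "Q3 roadmap review moved to Thursday",
]
share = sensitive_share(sample)
print(f"{share:.0%} of sampled records contain sensitive data")
```

Running a scan like this on a sample of each source system gives a first estimate of where sensitive data lives, which helps decide what to exclude, mask, or govern more tightly.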

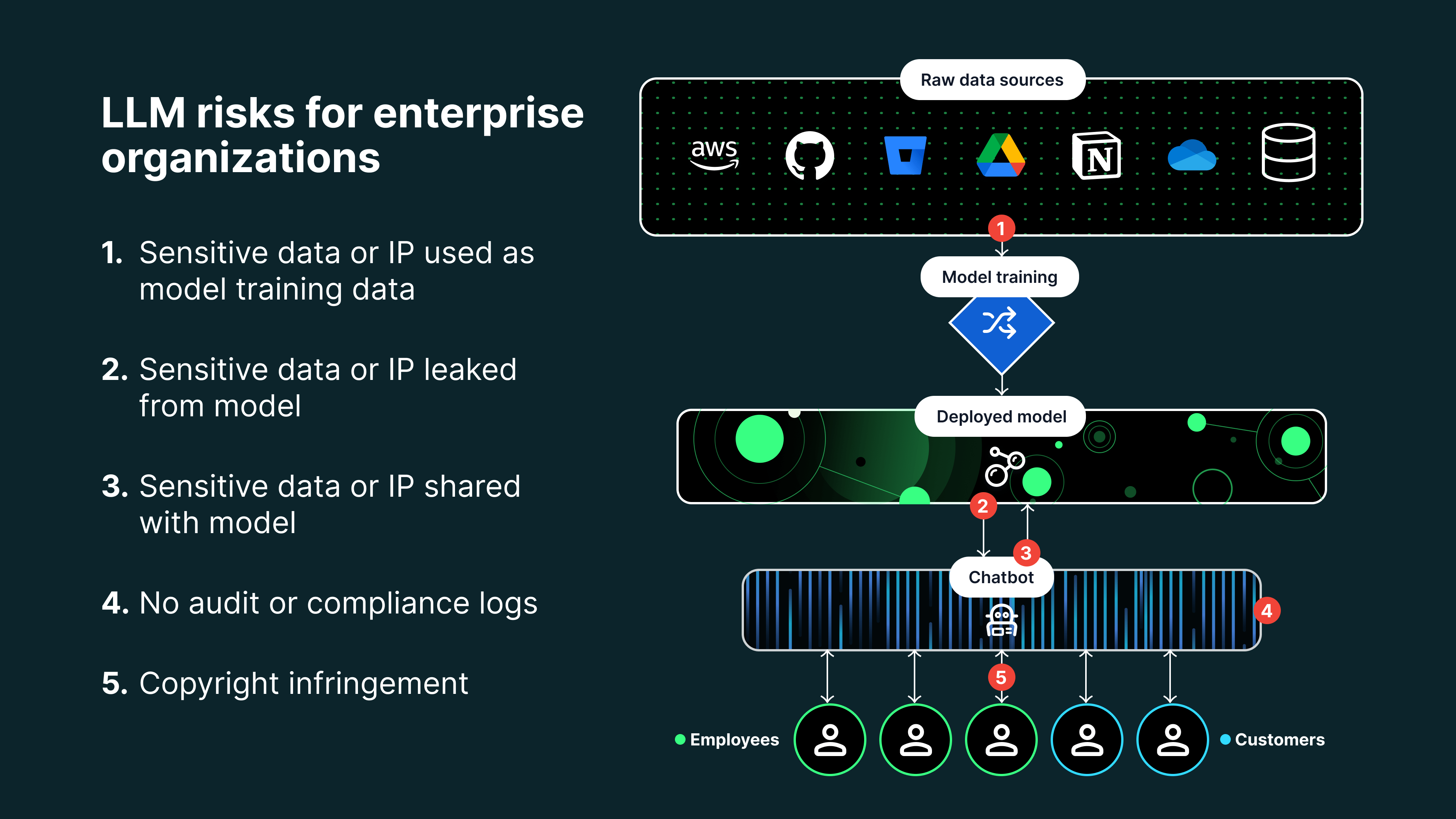

Tackling LLM data leakage risk at the training data level is costly (maybe even impossible)

AI transformation poses many data-related challenges, even for organizations with MDM competency. According to a Deloitte survey, 56% of enterprises expressed concerns about AI data quality and accuracy. Removing sensitive data, like PII or PHI, without undermining an AI model’s efficacy is a major conundrum. There’s also the lurking risk of data poisoning, which can derail AI initiatives.

Realistically, no matter where an organization stands in its MDM journey, AI deployments remain vulnerable. Training models with pristine datasets is a costly and time-intensive task. On top of that, even a model trained on clean data and protected by guardrails is still at risk from clever prompt engineering.

DLP for AI chatbots: Last line of data security defense

Data loss prevention (DLP) has emerged as the cornerstone for AI chatbot security. DLP for AI can help safeguard your organization’s data with features such as:

- Real-time monitoring: Continuous surveillance during interaction, which is vital to prevent data breaches.

- Real-time redaction: Blocking sensitive data from being input by the user or displayed to the user.

- Feedback loop: Obtaining user feedback to refine AI models, improving the models’ relevance and efficacy.

- Dynamic governance: Adaptable and evolving governance models because AI is not a static field.
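
As a rough sketch of how real-time monitoring and redaction could sit around a chatbot, the example below filters both the user's prompt and the model's reply and records each hit in an audit log. Everything here is hypothetical: `guarded_chat`, `redact`, `fake_model`, and the two patterns are stand-ins for illustration and do not describe any particular product's implementation.

```python
import re
from typing import Callable

# Illustrative patterns; a real DLP layer would cover many more data types.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# In-memory audit trail; a real deployment would ship this to a logging system.
audit_log: list[dict] = []


def redact(text: str, direction: str) -> str:
    """Replace sensitive matches with a placeholder and record each hit."""
    for label, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[{label} REDACTED]", text)
        if count:
            audit_log.append({"direction": direction, "type": label, "count": count})
    return text


def guarded_chat(prompt: str, model: Callable[[str], str]) -> str:
    """Redact the prompt before the model sees it, then redact the reply."""
    safe_prompt = redact(prompt, "input")
    reply = model(safe_prompt)
    return redact(reply, "output")


def fake_model(prompt: str) -> str:
    """Stand-in for a chatbot that leaks an email address in its answer."""
    return f"You said: {prompt} Our records list bob@example.com as the owner."


answer = guarded_chat("My SSN is 123-45-6789, can you update my file?", fake_model)
print(answer)
print(audit_log)
```

Because the filter wraps the conversation rather than the training data, it applies regardless of how the underlying model was trained, and the audit log gives security teams a record of what was caught in each direction.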

Polymer DLP for AI plugs into enterprise chatbots to audit and stop the loss of sensitive data and intellectual property. If you want to learn more about the Polymer solution, please reach out for a demo.